
Introduction

In today's digital ecosystem, every company generates a huge amount of data, whether it's user activity, transactions, logs, or data from external sources. But raw data is not very useful in its original form. It can be messy, incomplete, and scattered across different systems.

To make smart business decisions, companies need data that is clean, organized, and easy to analyze.

This is where ETL pipelines come in.

ETL pipelines help businesses take raw data, clean and organize it, and store it in a form that can be used for reporting, dashboards, and analytics.

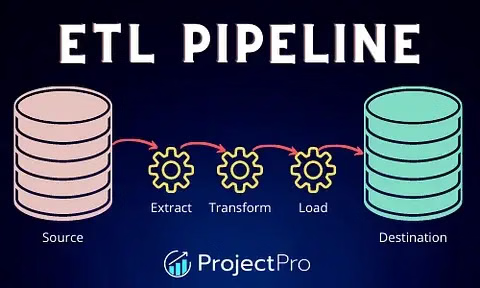

What is ETL?

ETL stands for:

- Extract → Collect data from different sources

- Transform → Clean and process the data

- Load → Store the data in a database or data warehouse

In simple words, ETL is a process that turns messy data into useful information.

How ETL Works

- Data is collected from different sources

- It is cleaned and processed

- Then stored in a central system

- Finally, used in dashboards or reports
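
To make the four steps above concrete, here is a minimal sketch of a pipeline in Python. The sample records, the `orders` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for the central system. It illustrates the flow rather than a production design.

```python
import sqlite3

# Hypothetical raw records, standing in for data pulled from a real source.
RAW_ORDERS = [
    {"order_id": "1", "amount": "19.99"},
    {"order_id": "2", "amount": ""},       # missing amount
    {"order_id": "1", "amount": "19.99"},  # duplicate of the first record
]

def extract():
    """Collect raw records (here, just a copy of the in-memory sample)."""
    return list(RAW_ORDERS)

def transform(rows):
    """Drop duplicates and rows with no amount, and convert text to numbers."""
    seen, clean = set(), []
    for row in rows:
        if row["order_id"] in seen or not row["amount"]:
            continue
        seen.add(row["order_id"])
        clean.append((int(row["order_id"]), float(row["amount"])))
    return clean

def load(rows, conn):
    """Store the cleaned rows in a central table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (order_id INTEGER PRIMARY KEY, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(extract()), conn)

# A dashboard or report would then query the central table.
order_count, revenue = conn.execute(
    "SELECT COUNT(*), SUM(amount) FROM orders"
).fetchone()
```

Keeping each step in its own function makes it easier to test and replace one stage without touching the others.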

Step-by-Step Explanation

Extract (Getting the Data)

In this step, data is collected from different places like:

- Databases (PostgreSQL, MySQL)

- APIs (like payment gateways or apps)

- Files (CSV, Excel, logs)

- Cloud storage (AWS S3, Google Cloud Storage)

Problem here:

Data comes in different formats and in large volumes, so it has to be handled carefully at this stage.

Commonly used extraction tools include Fivetran and Stitch, which automatically pull data from applications and databases. For bulk transfers between relational databases and Hadoop, Apache Sqoop has also been widely used, although the project is now retired.
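
For small jobs, extraction can also be written by hand. The sketch below reads from two of the source types listed above, a CSV file and a database, using only the Python standard library. The sample data, the `payments` table, and the helper names are assumptions made so the example runs without any external system.

```python
import csv
import io
import sqlite3

def extract_from_csv(file_obj):
    """Read rows from a CSV file object as dictionaries."""
    return list(csv.DictReader(file_obj))

def extract_from_db(conn, query):
    """Run a query and return the rows as dictionaries."""
    conn.row_factory = sqlite3.Row
    return [dict(row) for row in conn.execute(query)]

# Hypothetical sample sources: an in-memory CSV and an in-memory database.
csv_source = io.StringIO("order_id,amount\n1,19.99\n2,5.00\n")
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE payments (order_id INTEGER, status TEXT)")
db.execute("INSERT INTO payments VALUES (1, 'paid')")

orders = extract_from_csv(csv_source)
payments = extract_from_db(db, "SELECT order_id, status FROM payments")
```

Note that the CSV values come back as text (`"1"`, `"19.99"`) while the database returns typed values (`1`). This is a small instance of the "different formats" problem that the transform step has to resolve.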

Transform (Cleaning the Data)

This is often considered the most important step, because everything downstream depends on the quality of the transformed data.

Here we:

- Remove duplicate data

- Fix missing values

- Convert formats (like date, currency)

- Combine data from multiple sources

- Filter unnecessary data

After this step, data becomes clean and reliable.

For transforming data, companies often use powerful tools like Apache Spark for large datasets and dbt for SQL-based transformations in data warehouses.
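
As a rough illustration of several of the operations listed above (removing duplicates, fixing missing values, converting date formats, and filtering), here is a small sketch in plain Python. The field names, the `"unknown"` default, and the rule that cancelled orders are unnecessary are all assumptions chosen for the example.

```python
from datetime import datetime

def transform(rows, default_country="unknown"):
    """Deduplicate, fill missing values, normalize dates, and filter rows."""
    seen = set()
    clean = []
    for row in rows:
        # Filter unnecessary data: skip cancelled orders.
        if row.get("status") == "cancelled":
            continue
        # Remove duplicates based on order_id.
        if row["order_id"] in seen:
            continue
        seen.add(row["order_id"])
        clean.append({
            "order_id": int(row["order_id"]),
            # Fix missing values by falling back to a default.
            "country": row.get("country") or default_country,
            # Convert DD/MM/YYYY into ISO format (YYYY-MM-DD).
            "order_date": datetime.strptime(row["order_date"], "%d/%m/%Y")
            .date()
            .isoformat(),
        })
    return clean

raw = [
    {"order_id": "1", "country": "IN", "order_date": "05/01/2024", "status": "paid"},
    {"order_id": "1", "country": "IN", "order_date": "05/01/2024", "status": "paid"},
    {"order_id": "2", "country": "", "order_date": "17/02/2024", "status": "paid"},
    {"order_id": "3", "country": "US", "order_date": "01/03/2024", "status": "cancelled"},
]
clean = transform(raw)
```

With this sample input, the duplicate of order 1 and the cancelled order 3 are dropped, and order 2 gets the default country. Larger datasets would typically use a tool like Spark or SQL for the same logic.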

Load (Saving the Data)

Now the cleaned data is stored in systems like:

- Data warehouse (BigQuery, Snowflake, Redshift)

- Database (PostgreSQL)

- Data lake

There are two ways to load data:

- Full Load → Load all data every time

- Incremental Load → Load only new or changed data

Data loading is handled using tools like Apache NiFi and Airbyte, which help move processed data into data warehouses or storage systems.
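
The difference between a full load and an incremental load can be sketched with SQLite from the Python standard library. The `orders` table, the `updated_at` column, and the idea of tracking the last load date are assumptions for this example. Real warehouses offer their own merge or upsert mechanisms.

```python
import sqlite3

def full_load(conn, rows):
    """Replace the whole table with the given rows."""
    conn.execute("DELETE FROM orders")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

def incremental_load(conn, rows, last_loaded_at):
    """Insert or update only the rows changed after the last load."""
    changed = [r for r in rows if r[2] > last_loaded_at]
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", changed)
    conn.commit()
    return len(changed)

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)"
)

# Day 1: load everything.
day1 = [(1, 19.99, "2024-01-01"), (2, 5.00, "2024-01-01")]
full_load(conn, day1)

# Day 2: order 2 changed and order 3 is new, so only those two rows are written.
day2 = [(1, 19.99, "2024-01-01"), (2, 7.50, "2024-01-02"), (3, 12.00, "2024-01-02")]
loaded = incremental_load(conn, day2, last_loaded_at="2024-01-01")
rows = conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall()
```

The comparison on `updated_at` works here because ISO dates sort correctly as text. Incremental loads write less data, but they depend on the source reliably marking what has changed.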

Real-World Example

Let's take an e-commerce company:

- Extract → Data comes from website orders, payments, and user activity

- Transform → Remove duplicates, fix missing values, standardize currency

- Load → Store data in a warehouse

Now the company can:

- Track sales

- Understand customer behavior

- Monitor inventory
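
As a small sketch of the "standardize currency" and "track sales" parts of this example, the code below converts order amounts to one currency and totals sales per product. The exchange rates are made-up fixed values for illustration only, and the field names are assumptions.

```python
from collections import defaultdict

# Hypothetical fixed exchange rates to USD, for illustration only.
RATES_TO_USD = {"USD": 1.0, "EUR": 1.10, "INR": 0.012}

def standardize_currency(orders):
    """Convert every order amount to USD, rounded to cents."""
    return [
        {
            "product": o["product"],
            "amount_usd": round(o["amount"] * RATES_TO_USD[o["currency"]], 2),
        }
        for o in orders
    ]

def sales_by_product(orders):
    """Total the standardized amounts per product."""
    totals = defaultdict(float)
    for o in orders:
        totals[o["product"]] += o["amount_usd"]
    return dict(totals)

orders = [
    {"product": "shoes", "amount": 50.0, "currency": "USD"},
    {"product": "shoes", "amount": 40.0, "currency": "EUR"},
    {"product": "bag", "amount": 1000.0, "currency": "INR"},
]
report = sales_by_product(standardize_currency(orders))
```

A real pipeline would look up exchange rates for the order date and would usually use a decimal type rather than floats for money.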

Basic ETL Architecture

A simple ETL system includes:

- Data Sources → Where data comes from

- ETL Process → Where data is cleaned and processed

- Staging Area → Temporary storage

- Data Warehouse → Final storage

- BI Tools → Dashboards (Power BI, Tableau)
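
One way to picture the staging area is as a temporary table that receives incoming rows before they are checked and copied to final storage. The sketch below assumes hypothetical `staging_orders` and `warehouse_orders` tables in SQLite and a simple validity rule (an ID must be present and the amount must not be negative).

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE staging_orders (order_id INTEGER, amount REAL);
    CREATE TABLE warehouse_orders (order_id INTEGER PRIMARY KEY, amount REAL);
""")

def stage(conn, rows):
    """Put incoming rows into temporary storage, replacing any earlier batch."""
    conn.execute("DELETE FROM staging_orders")
    conn.executemany("INSERT INTO staging_orders VALUES (?, ?)", rows)

def promote(conn):
    """Copy valid staged rows into the warehouse table, then clear staging."""
    with conn:  # commits on success, rolls back if an error is raised
        conn.execute("""
            INSERT OR REPLACE INTO warehouse_orders
            SELECT order_id, amount FROM staging_orders
            WHERE order_id IS NOT NULL AND amount >= 0
        """)
        conn.execute("DELETE FROM staging_orders")

stage(conn, [(1, 19.99), (2, -5.0), (None, 3.0)])
promote(conn)
final = conn.execute("SELECT * FROM warehouse_orders").fetchall()
staged_left = conn.execute("SELECT COUNT(*) FROM staging_orders").fetchone()[0]
```

Because bad rows are stopped in staging, the warehouse table only ever sees data that passed the checks.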

ETL vs ELT

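| ETL | ELT |
| --- | --- |
| Data is cleaned before storing | Data is stored first, then cleaned |
| Works well in traditional systems | Used in modern cloud systems |
| Can be slower for big data, since transformation runs before loading | Often faster at scale, since transformation runs inside the warehouse |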
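
To show what "stored first, then cleaned" looks like in practice, here is a minimal ELT-style sketch. Raw text values are loaded as-is into a hypothetical `raw_orders` table, and the cleaning is then done with SQL inside the database. SQLite stands in for a cloud warehouse here.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Load: store the raw data first, without cleaning it.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?)",
    [("1", "19.99"), ("1", "19.99"), ("2", ""), ("3", "7.50")],
)

# Transform: clean the data afterwards, using the database's own SQL engine.
conn.execute("""
    CREATE TABLE clean_orders AS
    SELECT DISTINCT CAST(order_id AS INTEGER) AS order_id,
                    CAST(amount AS REAL) AS amount
    FROM raw_orders
    WHERE amount <> ''
""")
clean = conn.execute("SELECT * FROM clean_orders ORDER BY order_id").fetchall()
```

In an ETL pipeline, the same deduplication and type conversion would happen in a separate process before anything reaches the database.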

Popular ETL Tools

Some widely used tools in and around ETL pipelines include:

- Apache Airflow: used to schedule and manage ETL pipelines

- Talend: user-friendly ETL tool with a GUI

- Informatica PowerCenter: widely used in large enterprises

- Microsoft Power BI: BI tool used for dashboards and reporting on the loaded data

- Tableau: popular BI tool for analytics

These tools help automate and simplify the ETL process and the reporting built on top of it.

Why ETL is Important

ETL pipelines help companies:

- Combine data from multiple sources

- Improve data quality

- Make better decisions

- Build dashboards and reports

- Automate data workflows

Challenges in ETL

Even though ETL is powerful, it has some challenges:

- Handling large data volumes

- Keeping pipelines running without failure

- Managing complex transformations

- Ensuring data security
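
One common way to keep a pipeline running through temporary failures is to retry a failing step with a growing delay. The sketch below is one possible approach, not a standard API. The `run_with_retries` helper, its parameters, and the deliberately flaky `flaky_extract` step are all hypothetical.

```python
import time

def run_with_retries(step, max_attempts=3, base_delay=0.01, sleep=time.sleep):
    """Run a pipeline step, retrying with exponential backoff on failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except Exception:
            if attempt == max_attempts:
                raise  # give up after the last attempt
            # Wait base_delay, then 2x, then 4x, and so on.
            sleep(base_delay * 2 ** (attempt - 1))

# Hypothetical step that fails twice before succeeding.
calls = {"count": 0}

def flaky_extract():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return ["row-1", "row-2"]

result = run_with_retries(flaky_extract)
```

Orchestration tools such as Apache Airflow provide their own retry settings, so hand-written retries like this are mostly useful in small scripts.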

Conclusion

ETL pipelines are an essential part of modern data systems. They help convert raw, messy data into meaningful insights that businesses can use to grow and improve.
